If you don’t have a powerful GPU, good luck finding one for a reasonable price.


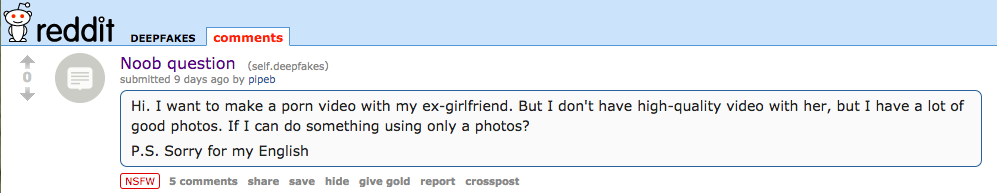

You need to download and configure Nvidia’s CUDA framework to run the TensorFlow code, so the app requires a GeForce GPU. The tool is available for download and mirrored all over the internet, but setup is non-trivial.

Well, this is the internet, so no surprise there.

FakeApp is based on work done on a deep learning algorithm by a Reddit user known as Deepfakes.

And of course, they’re using it to make porn.

Machine learning has become so advanced that a handful of developers have created a tool called FakeApp that can create convincing “face swap” videos. We already have impressive object recognition in photos and speech synthesis, and in a few years cars may drive themselves.

On its website and social media, DeepNude says the team "did not expect these traffic and our servers need reinforcement," and is working to get the app back online "in a few days."

But people have already downloaded the software, and this could very well mark the beginning of incredibly easy access to technology with terrifying implications.

Many of the most magical pieces of consumer technology we have today are thanks to advances in neural networks and machine learning.

The team behind pix2pix, a group of computer science researchers, called DeepNude's use of their work "quite concerning."

"We have seen some wonderful uses of our work, by doctors, artists, cartographers, musicians, and more," MIT professor Phillip Isola, who helped create pix2pix, told Business Insider in an email. "We as a scientific community should engage in serious discussion on how best to move our field forward while putting reasonable safeguards in place to better ensure that we can benefit from the positive use-cases while mitigating abuse."

Business Insider was unable to test the app because the DeepNude servers are offline.

Deepfake technology has already been used for revenge porn targeting anyone from people's friends to their classmates, in addition to fueling fake nude videos of celebrities like Scarlett Johansson.

As Johansson experienced firsthand last year when her face was superimposed into porn videos, it doesn't matter how much you deny that the nude footage is actually of you.

So while deepfake tech has serious implications for the spread of false news and disinformation, DeepNude shows how quickly the technology has evolved to make it ever easier for people without technical skills to create realistic-enough content that could then be used for blackmail and bullying, especially against women.


The app software will do the rest for you and layer your face on top of the original face used in the GIF. That means snapping a picture of your own face, and pasting it onto a GIF reaction image.

But others have used the technology to effortlessly spread misinformation, like this deepfake video of Alexandria Ocasio-Cortez, which was altered to make the congresswoman seem like she doesn't know the answers to questions from an interviewer.

The popular app Morphin lets you take the GIF reactions available through your phone's keyboard and make them your own. Some have used the technology to create computer-generated cats, Airbnb listings, and revised versions of famous Hollywood movies.

The software only generates doctored images, not videos, of women, but it's the low barrier to entry that makes the app problematic.

DeepNude is just the latest example of how techies have been using artificial intelligence to create deepfakes, eerily realistic fake depictions of someone doing or saying something they have never done.


The website sells access to a premium version of the software for $50 that removes the watermark, and requires a software download that's compatible with Windows 10 and Linux devices.


Up until now, most deepfake technology and software has required uploading vast amounts of video footage of the subject in order to train the AI to create realistic-looking, yet false, depictions of the person saying or doing something.

But DeepNude, which was first discovered by Motherboard, makes generating fake nude images a one-click process: All someone would have to do is upload a photo of any woman (it reportedly doesn't generate male nudes), and let the software do the work.

All the images created with the free version of DeepNude are produced with a watermark by default, but Motherboard was able to easily remove it to get the unmarked image.

A new web app that lets users create realistic-looking nude images of women offers a terrifying glimpse into how deepfake technology can now be easily used for malicious purposes like revenge porn and bullying.